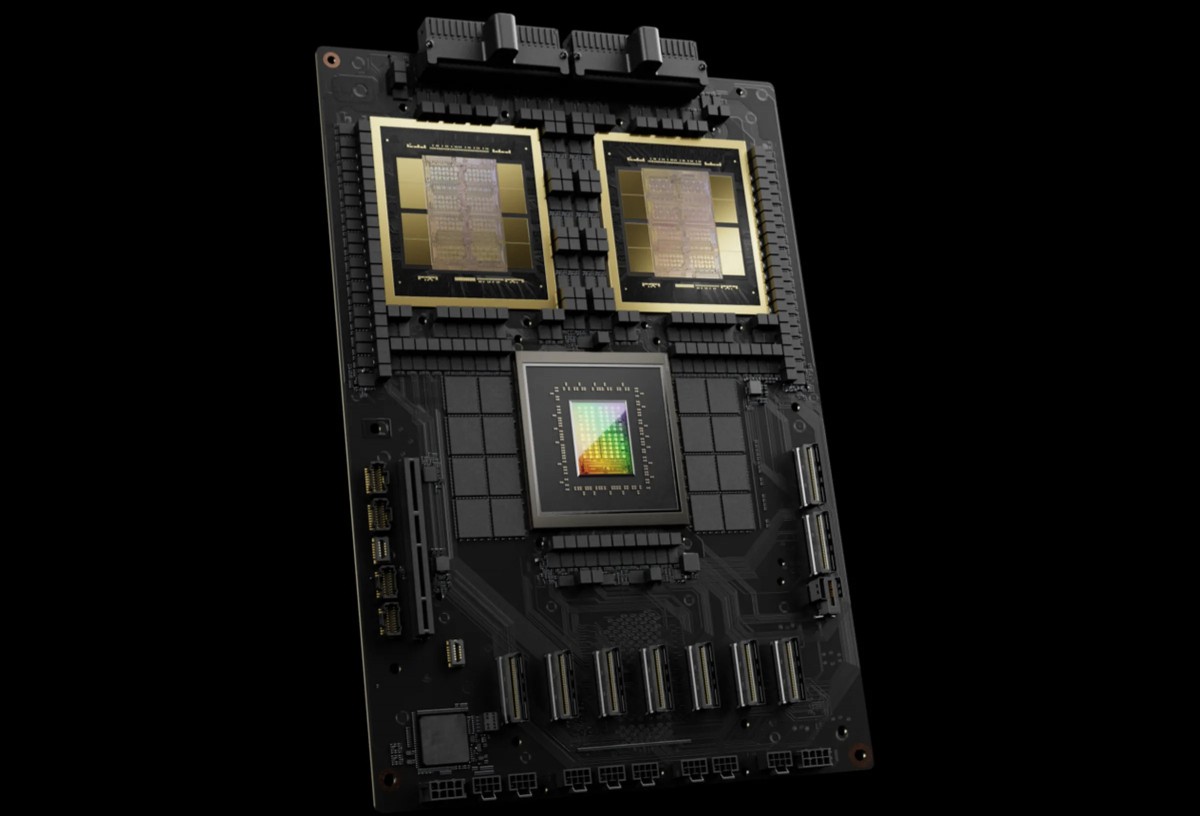

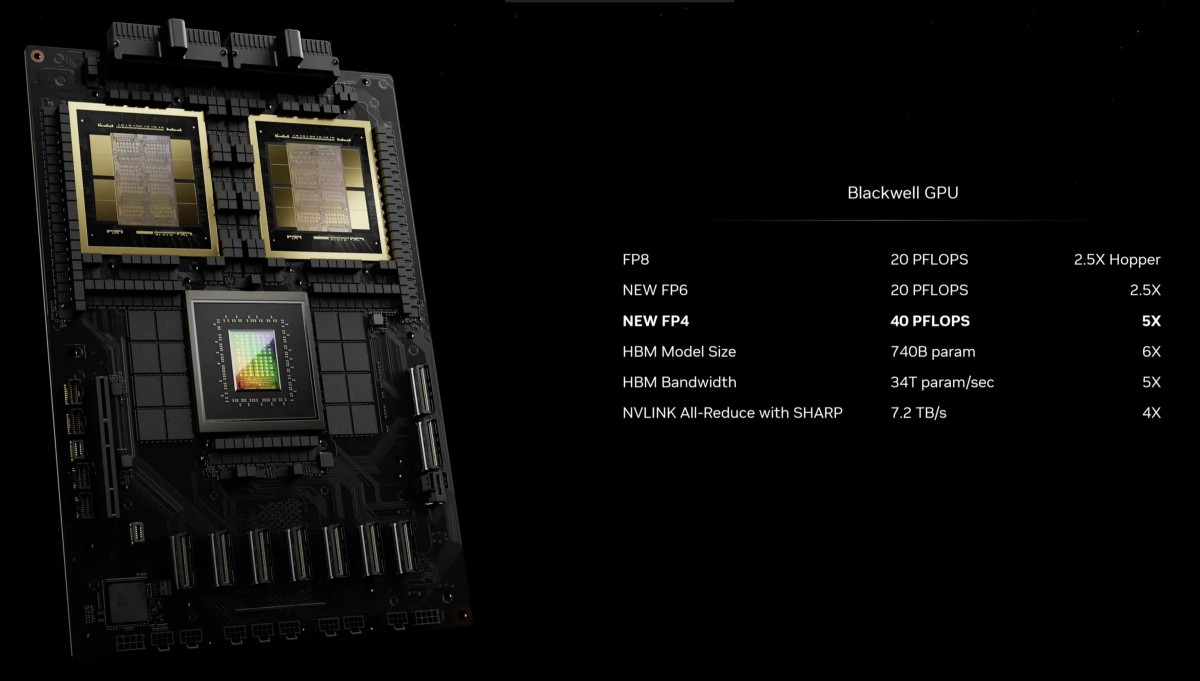

At its GPU Technology Conference (GTC), Nvidia announced the Blackwell B200 GPU, which it calls the world's most powerful chip for AI, along with the GB200 "superchip" that pairs two B200 GPUs with a Grace CPU. Blackwell is the successor to the H100 AI chip and promises large improvements in performance and efficiency.

With 208 billion transistors, the new B200 GPU delivers up to 20 petaflops of FP4 compute. Nvidia also says the GB200 offers up to 30 times the performance of the H100 in LLM inference workloads while using up to 25 times less energy, and that it is seven times faster than the H100 on a GPT-3 LLM benchmark.

For example, Nvidia says that training a model with 1.8 trillion parameters previously required 8,000 Hopper GPUs drawing about 15 megawatts, whereas 2,000 Blackwell GPUs can do the same job with just 4 megawatts.
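The ratios implied by those figures can be worked out in a few lines. This is a minimal sketch that uses only the numbers quoted above; the variable and function names are illustrative, and the per-GPU value is simply total power divided by GPU count, not a measured chip rating.

```python
# Figures quoted by Nvidia for training a 1.8-trillion-parameter model.
hopper = {"gpus": 8_000, "megawatts": 15}
blackwell = {"gpus": 2_000, "megawatts": 4}

# How many times fewer GPUs and how much less power Blackwell needs.
gpu_ratio = hopper["gpus"] / blackwell["gpus"]
power_ratio = hopper["megawatts"] / blackwell["megawatts"]


def kw_per_gpu(cluster):
    """Average cluster power per GPU in kilowatts (total power / GPU count)."""
    return cluster["megawatts"] * 1_000 / cluster["gpus"]


print(f"GPU count reduction: {gpu_ratio:.2f}x")
print(f"Power reduction:     {power_ratio:.2f}x")
print(f"Hopper:    {kw_per_gpu(hopper):.3f} kW per GPU")
print(f"Blackwell: {kw_per_gpu(blackwell):.3f} kW per GPU")
```

On these numbers, average power per GPU is roughly the same for both generations (about 2 kW), so the quoted saving comes mainly from needing a quarter as many GPUs.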

To further increase efficiency, Nvidia designed a new network switch chip with 50 billion transistors that can connect up to 576 GPUs and let them communicate with each other at 1.8 TB/s of bidirectional bandwidth.

Nvidia says this addresses an earlier communication bottleneck, in which a system with just 16 GPUs would spend 60% of its time communicating and only 40% computing.

Nvidia says it is providing complete solutions for enterprises. For example, the GB200 NVL72 packs 36 CPUs and 72 GPUs into a single liquid-cooled rack. The DGX SuperPOD for DGX GB200, in turn, combines eight of these systems into one, for a total of 288 CPUs and 576 GPUs with 240TB of memory.
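The SuperPOD totals follow directly from the per-rack counts, as this small sketch shows. The constant names are illustrative, and the per-rack memory figure is just the quoted total divided evenly across the eight racks, which is an assumption rather than a published specification.

```python
# Per-rack figures for the GB200 NVL72, as quoted above.
RACK_CPUS = 36
RACK_GPUS = 72

# The DGX SuperPOD combines eight of these racks.
RACKS_PER_SUPERPOD = 8
SUPERPOD_MEMORY_TB = 240

superpod_cpus = RACK_CPUS * RACKS_PER_SUPERPOD
superpod_gpus = RACK_GPUS * RACKS_PER_SUPERPOD

# Assumes memory is split evenly across racks.
memory_per_rack_tb = SUPERPOD_MEMORY_TB / RACKS_PER_SUPERPOD

print(f"CPUs: {superpod_cpus}, GPUs: {superpod_gpus}")
print(f"Memory per rack: {memory_per_rack_tb:.0f} TB")
```

The resulting 576 GPUs matches the maximum number of GPUs the new switch chip is said to handle.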

Companies such as Oracle, Amazon, Google and Microsoft have shared plans to integrate NVL72 racks into their cloud services.

The Blackwell architecture behind the B200 GPU may also become the basis for the upcoming RTX 50 series.
